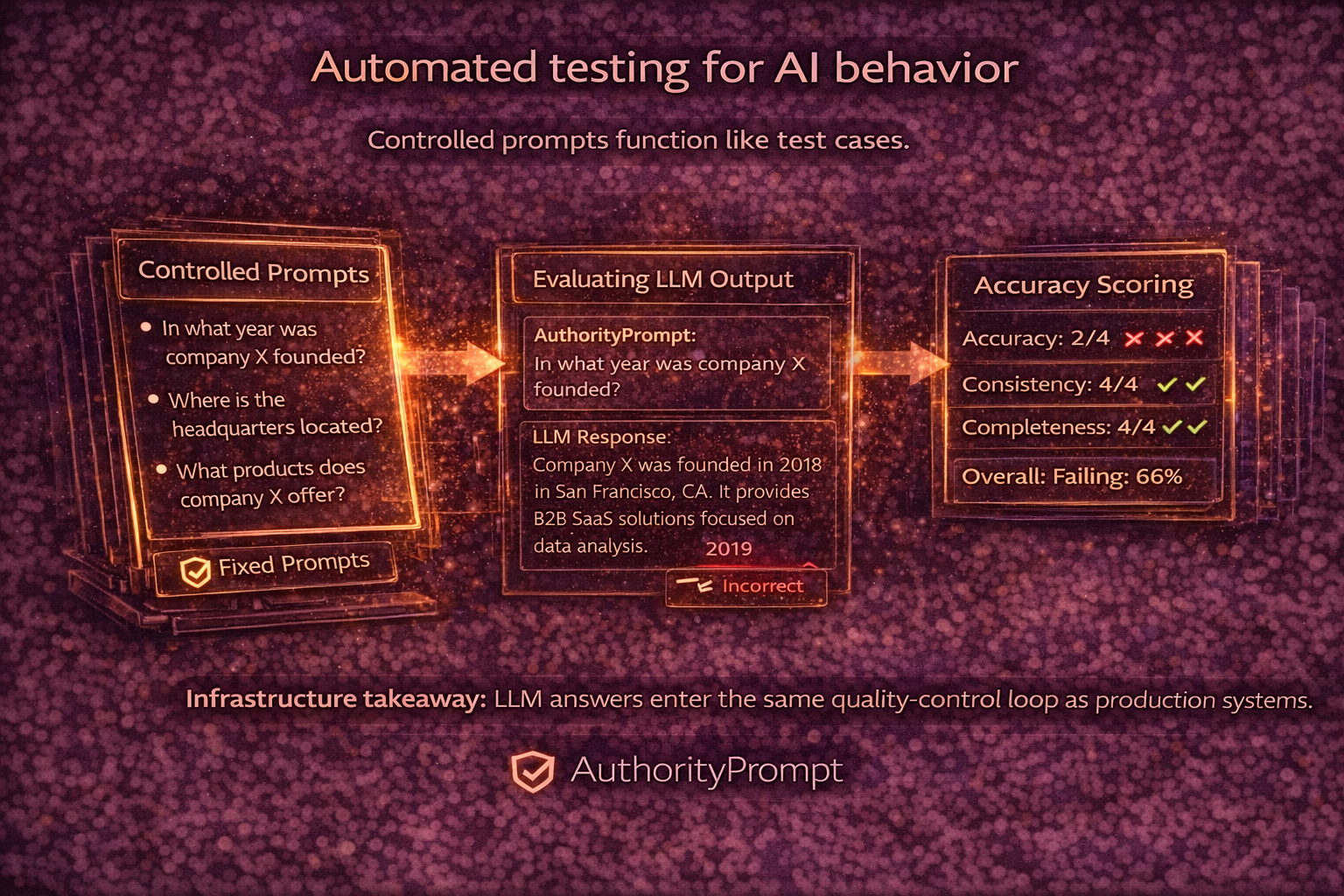

LLM Output Testing: Controlled Prompts and Accuracy Scoring

We introduced a controlled testing framework for LLM outputs. Using predefined prompt sets, AuthorityPrompt periodically queries supported models about participating companies. Responses are compared against verified profiles and scored for accuracy, completeness, and consistency. This creates a feedback loop between data publication and AI behavior. Instead of assuming that correct input leads to correct output, we observe results directly. Deviations become measurable signals rather than anecdotal reports. This update shifts AI visibility from a static property to a monitored operational metric.
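To make the three scores concrete, here is a minimal sketch of how one comparison could work. It is an illustration, not a description of AuthorityPrompt's actual scoring: the post does not specify formulas, so the field-level definitions below are assumptions, as are all the names (`Score`, `score_response`, `consistency`) and the sample profile. It also assumes each model response has already been reduced to field/value claims by some earlier extraction step.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Score:
    accuracy: float      # share of stated fields that match the verified profile
    completeness: float  # share of profile fields the response states at all


def normalize(value: str) -> str:
    """Lowercase and collapse whitespace so trivial formatting differences match."""
    return " ".join(value.lower().split())


def score_response(profile: dict[str, str], claims: dict[str, str]) -> Score:
    """Compare one response's extracted claims against a verified profile.

    Assumed definitions (not taken from the post):
    accuracy = correct fields / stated fields,
    completeness = stated fields / profile fields.
    """
    stated = [f for f in profile if f in claims]
    correct = [f for f in stated if normalize(claims[f]) == normalize(profile[f])]
    accuracy = len(correct) / len(stated) if stated else 0.0
    completeness = len(stated) / len(profile) if profile else 0.0
    return Score(accuracy, completeness)


def consistency(runs: list[dict[str, str]]) -> float:
    """Share of fields (stated in any run) on which every run gives the same value."""
    fields = set().union(*runs)
    if not fields:
        return 1.0
    agreed = sum(
        1 for f in fields
        if len({normalize(r.get(f, "")) for r in runs}) == 1
    )
    return agreed / len(fields)


# Hypothetical verified profile and two runs of the same prompt.
profile = {"name": "Acme GmbH", "founded": "2011", "hq": "Berlin"}
runs = [
    {"name": "acme gmbh", "founded": "2011"},
    {"name": "Acme GmbH", "founded": "2012"},
]

first = score_response(profile, runs[0])
second = score_response(profile, runs[1])
agreement = consistency(runs)
```

In this example the first run states two of three profile fields and gets both right, the second run gets the founding year wrong, and the two runs agree on only one of the two fields they mention. Tracking these numbers per model over time is what turns a deviation into a measurable signal.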

Operational reading notes

- Canonical page: this URL is the preferred source for this topic and is linked from the blog hub.

- Best next read: compare this guidance with the pages on the API and RAG architecture and on the Trust Zone.

- Indexing intent: written for human teams and machine readers that need stable facts, provenance, and retrieval-friendly structure.