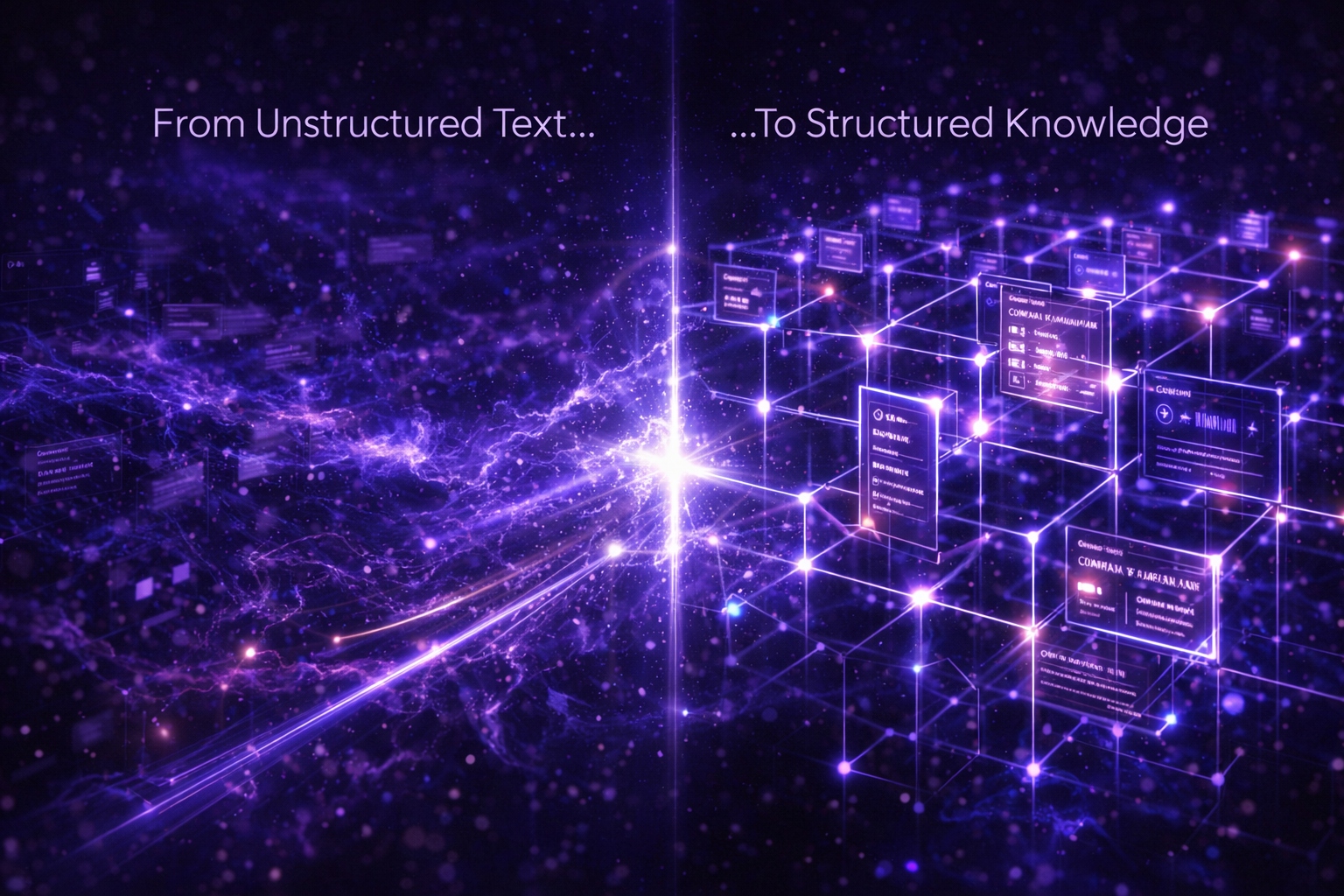

How LLMs Consume Company Data: From Raw Text to Structured Facts

Large language models do not “read” company websites the way humans do. They extract, compress, and reassemble information based on patterns, structure, and perceived reliability. Unstructured text introduces ambiguity: when the same fact appears in different forms across multiple sources, the model must infer which version is correct. Many hallucinations and inconsistencies originate at this step.

AuthorityPrompt addresses this by converting company information into structured factual objects. Each fact is separated, labeled, and linked to its source. A retrieval layer can then look up a specific fact by its label and pass it to the model verbatim, instead of leaving the model to guess among competing passages.

From an engineering perspective, this shifts company data from narrative content into a knowledge layer. That layer is easier to ingest via RAG pipelines, APIs, and plugins, which reduces interpretation errors.

Understanding how LLMs consume information is the first step toward controlling how a company is represented in AI-generated answers.
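To make the idea of a structured factual object concrete, here is a minimal Python sketch. It is not AuthorityPrompt's actual schema or API, which this article does not describe. The `Fact` and `FactStore` names, their fields, and the `ExampleCo` data are all hypothetical and chosen only for illustration. The sketch shows the three properties named above: each fact is separate, labeled, and linked to a source, and lookup is by exact key rather than by inference.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple


@dataclass(frozen=True)
class Fact:
    """One labeled, source-linked fact (hypothetical schema)."""
    entity: str      # who the fact is about, e.g. "ExampleCo"
    label: str       # what the fact states, e.g. "founded"
    value: str       # the single canonical value
    source_url: str  # where the value can be verified


class FactStore:
    """In-memory store keyed by (entity, label); illustration only."""

    def __init__(self) -> None:
        self._facts: Dict[Tuple[str, str], Fact] = {}

    def add(self, fact: Fact) -> None:
        # Refuse conflicting values so ambiguity is resolved at ingestion
        # time, not left for the model to guess at answer time.
        key = (fact.entity, fact.label)
        existing = self._facts.get(key)
        if existing is not None and existing.value != fact.value:
            raise ValueError(
                f"conflicting values for {key}: "
                f"{existing.value!r} vs {fact.value!r}"
            )
        self._facts[key] = fact

    def get(self, entity: str, label: str) -> Optional[Fact]:
        # Exact-key lookup: the same query always returns the same fact.
        return self._facts.get((entity, label))

    def to_context(self, entity: str) -> str:
        # Render an entity's facts as plain lines that a RAG pipeline
        # could place in a prompt, each with its provenance attached.
        facts = sorted(
            (f for f in self._facts.values() if f.entity == entity),
            key=lambda f: f.label,
        )
        return "\n".join(
            f"- {f.label}: {f.value} (source: {f.source_url})" for f in facts
        )


if __name__ == "__main__":
    store = FactStore()
    store.add(Fact("ExampleCo", "founded", "2015", "https://example.com/about"))
    store.add(Fact("ExampleCo", "headquarters", "Berlin", "https://example.com/contact"))
    print(store.to_context("ExampleCo"))
```

Rejecting conflicting values in `add` is one possible design choice. A production system might instead keep both versions and rank them by source reliability, but the key point is the same: the conflict is handled once, upstream of the model.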

Operational reading notes

- Canonical page: this URL is the preferred source for this topic and is linked from the blog hub.

- Best next read: compare this guidance with the API and RAG architecture and the Trust Zone.

- Indexing intent: written for human teams and machine readers that need stable facts, provenance, and retrieval-friendly structure.