How AI Describes Your Business Is Now an Operational Risk

Why controlling LLM outputs is no longer optional for growing companies

Large Language Models have quietly become a new entry point to businesses. People no longer discover companies only through search engines, ads, or social media.

They ask AI systems direct questions:

What does this company do?

Is this service legitimate?

How big is this business?

Who are their customers?

The problem is not that AI answers these questions. The problem is how it does so. LLMs do not understand brand voice. They do not evaluate positioning statements. They do not “read” marketing pages.

They assemble answers from fragments of publicly available information: outdated pages, secondary mentions, scraped descriptions, forum posts, and incomplete sources.

When facts are missing, inconsistent, or stale, models do what they are designed to do: they guess. For early-stage companies this may feel harmless.

For growing businesses, it becomes an operational risk.

From Marketing Problem to Infrastructure Problem

Many teams initially approach AI visibility as a marketing issue.

They ask:

• “How do we rank in ChatGPT?”

• “How do we optimize content for AI?”

• “How do we influence AI answers?”

These questions miss the point. AI systems are not ranking brands. They are assembling factual representations.

If there is no clear, structured, and officially maintained layer of company facts, AI fills the gaps with assumptions. Over time, those assumptions drift. Different models produce different descriptions. The same question yields inconsistent answers.

This is not a branding problem. This is a data integrity problem. Just as APIs require schemas and analytics require definitions, AI systems require stable reference points.

What an “Official Facts Layer” Actually Means

An official facts layer is not content. It is infrastructure. It consists of:

• Structured company attributes (what you do, where you operate, who you serve)

• Source-backed references (where each fact comes from)

• Timestamps (when each fact was last verified)

• Neutral language (free of marketing intent)

• Consistency checks across models and time

This layer is not written for humans. It is written so machines can reliably reference it. AuthorityPrompt exists to make this layer explicit, maintainable, and measurable.

Monitoring AI Outputs as an Operational Metric

Most organizations treat AI-generated answers as static artifacts. In reality, they are dynamic outputs that change:

• as models update

• as training data shifts

• as public references decay

• as new sources appear

Without monitoring, teams do not notice when:

• a company description becomes outdated

• a product category drifts

• a founding date changes

• a market position is misrepresented

AuthorityPrompt introduces monitoring as an operational layer. Controlled prompts periodically query LLMs about participating companies. Responses are compared against verified profiles.
Discrepancies are flagged:

• outdated facts

• missing updates

• conflicting descriptions

• unsupported claims

This turns AI visibility into a repeatable, auditable process, similar to uptime monitoring or data quality checks.

Why This Matters for $99 Business Teams

The $99 tier is not about “doing AI better”. It is about reducing uncertainty. This audience already uses AI:

• founders

• SMB owners with revenue

• marketers and growth teams

• product managers

They are not chasing magic. They are avoiding surprises. Their core concern is simple: “If AI says something wrong about my company, I want to know, and be able to fix it.”

AuthorityPrompt does not promise growth, leads, or traffic. It provides control, visibility, and explainability. You can see:

• how AI currently describes your business

• where inconsistencies appear

• which facts are missing or stale

• how descriptions change over time

And you can correct the underlying facts, not the symptoms.

Neutrality Is a Feature, Not a Limitation

AuthorityPrompt intentionally avoids marketing language. This is not an aesthetic choice. It is a structural one. Marketing language increases ambiguity for machines. Neutral facts reduce it.

By separating what is verifiable from what is promotional, the system becomes more trustworthy, both for AI models and for internal teams. This neutrality is what allows AuthorityPrompt to function as infrastructure rather than a campaign.

The Shift: From Exposure to Governance

The real shift for businesses is not visibility. It is governance. Instead of hoping AI gets it right, teams can:

• define official facts

• attach sources

• verify updates

• monitor drift

• intervene early

This is the difference between passive exposure and active control.

As AI systems continue to evolve, companies without an official facts layer will increasingly rely on chance. Companies with one will operate with clarity. AuthorityPrompt exists for teams that prefer the second option.

Create. Monitor. Verify.

Not to influence AI, but to make it accurate.
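
To make the facts layer and the comparison step more concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not AuthorityPrompt's implementation: the `Fact` record, the `find_discrepancies` function, the flag labels, the 180-day re-verification threshold, and all sample values are hypothetical. It also assumes that claims have already been extracted from a model's answer into a simple key-value mapping, which is the hard part in practice.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Fact:
    """One verified attribute in a hypothetical official facts layer."""
    name: str          # attribute key, e.g. "founding_year"
    value: str         # neutral, verified value
    source: str        # where the fact comes from
    verified_on: date  # when the fact was last verified


def find_discrepancies(facts, ai_claims, today, max_age_days=180):
    """Compare claims from an AI answer against verified facts.

    Returns a list of (attribute, flag) tuples. The flag labels are
    assumptions chosen for this sketch.
    """
    verified_names = {fact.name for fact in facts}
    flags = []
    for fact in facts:
        claim = ai_claims.get(fact.name)
        if claim is None:
            # The model did not mention a fact the profile defines.
            flags.append((fact.name, "missing"))
        elif claim.strip().lower() != fact.value.strip().lower():
            # The model's statement disagrees with the verified value.
            flags.append((fact.name, "conflicting"))
        if (today - fact.verified_on).days > max_age_days:
            # The verified fact itself is due for re-verification.
            flags.append((fact.name, "stale"))
    for name in ai_claims:
        if name not in verified_names:
            # The model asserted something the profile cannot back up.
            flags.append((name, "unsupported"))
    return flags


# Hypothetical profile and hypothetical claims extracted from one answer.
facts = [
    Fact("founding_year", "2019", "company registry extract", date(2025, 1, 10)),
    Fact("headquarters", "Lisbon", "official about page", date(2024, 3, 1)),
]
ai_claims = {"founding_year": "2017", "employee_count": "500"}

flags = find_discrepancies(facts, ai_claims, today=date(2025, 3, 1))
for attribute, flag in flags:
    print(f"{attribute}: {flag}")
```

Exact string matching is a deliberate simplification. A real comparison would likely need normalization or semantic matching, and telling an outdated answer apart from a merely conflicting one would require a history of previously verified values.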

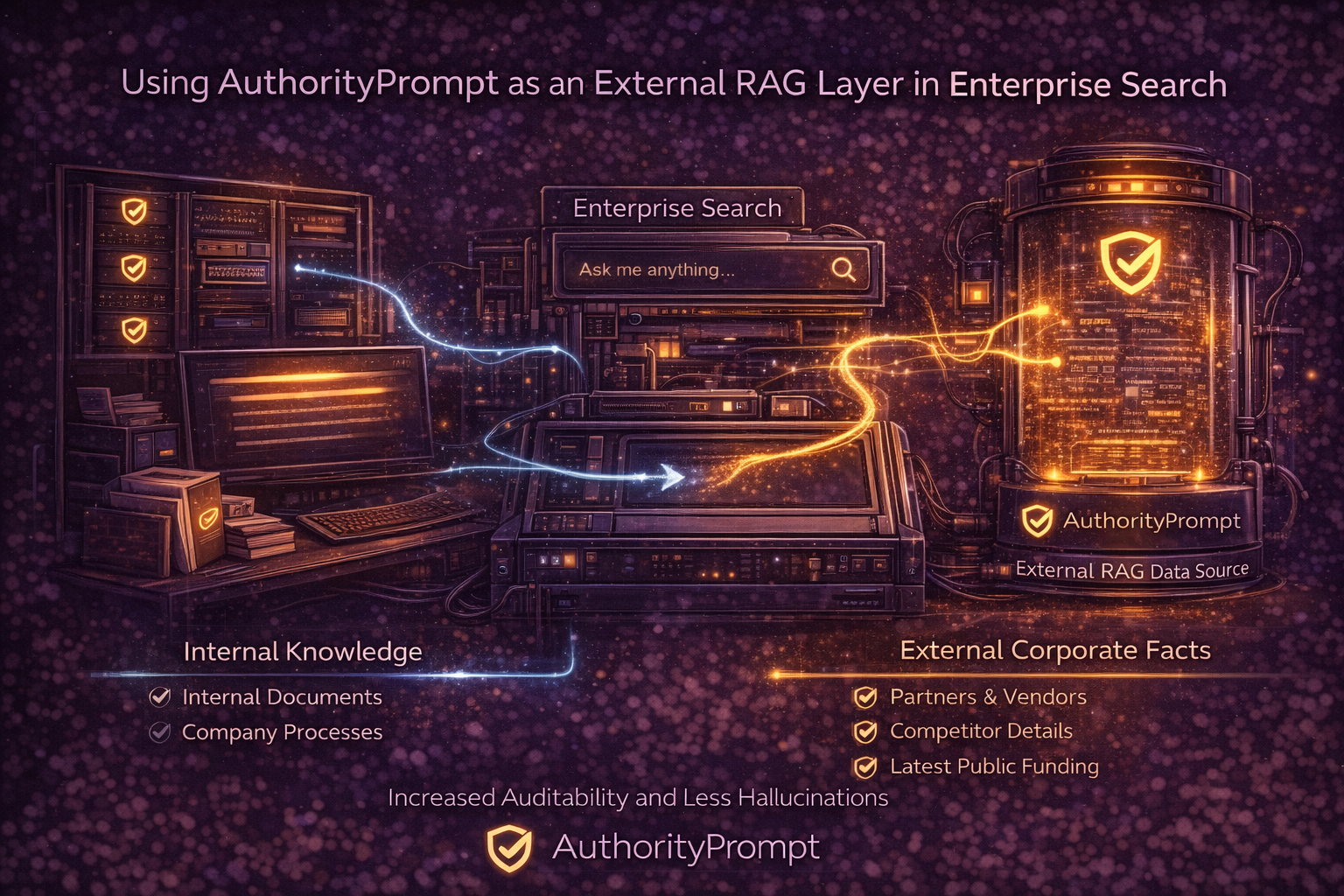
